Cluster analysis is a set of techniques used to classify objects, cases, or figures into groups of related items. These groups are called clusters. Cluster analysis is also known as numerical taxonomy or classification analysis. In cluster analysis, there is no prior information about which group or cluster any object belongs to. It is used for many purposes, for example to target products in marketing at specific customer groups.

To understand cluster analysis, we first need to know what clustering is. Clustering is the process of grouping objects into classes or groups based on the similarity of, or relationship between, their data. The groups can also be labelled so that each group can be easily identified and used in a number of ways.
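Similarity between objects is usually measured as a distance between their attribute values. The following is a minimal sketch that assumes each object is described by numeric features and uses Euclidean distance. The customer records are made-up illustration data.

```python
import math

def euclidean_distance(a, b):
    """Straight-line distance between two numeric feature vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

# Hypothetical customers described by (visits per year, average spend).
alice = (12, 40.0)
bob = (11, 42.0)
carol = (2, 300.0)

# A smaller distance means more similar objects, so alice and bob
# are better candidates for the same group than alice and carol.
print(euclidean_distance(alice, bob))    # about 2.24
print(euclidean_distance(alice, carol))  # about 260.19
```

The spend values are much larger than the visit counts, so spend dominates the distance here. In practice, attributes are often standardized before clustering.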

Why Do We Need Cluster Analysis

Cluster analysis can be used to identify homogeneous groups of potential customers or buyers based on their previous purchase history. A cluster analysis typically involves formulating the problem, selecting an approach, and choosing a clustering algorithm. After deciding on the number of clusters, you can examine and interpret the resulting groups for marketing or business purposes.

Algorithms in Cluster Analysis

Cluster analysis can use many different clustering algorithms to arrive at the final result. It is distinct from data processing, although it can be one step of a data processing workflow, depending on how it is used. Clustering algorithms can be classified by the cluster model they are based on. In this overview, we look at some of the most prominent examples.

- Connectivity-based clustering, also known as hierarchical clustering: Hierarchical clustering is based on the idea that objects are more related to nearby objects than to objects farther away. The algorithm connects objects to form clusters purely on the basis of their distances. A cluster can be described by the maximum distance needed to connect its parts, so different distances produce different clusters. The result is a hierarchy of clusters that can be represented as a dendrogram. In a dendrogram, the y-axis marks the distance at which clusters merge, and the x-axis places the objects so that the clusters do not mix.

- Centroid-based clustering: In centroid-based clustering, each cluster is represented by a central vector, which is not necessarily a member of the data set. When the number of clusters is fixed to k, clustering becomes a formal optimization problem: find k cluster centers and assign each object to its nearest center so that the total squared distance between objects and their centers is minimized. The best-known algorithm of this kind is k-means.

- Distribution-based clustering: This model is based on statistical distributions. A cluster consists of the objects that most likely belong to the same distribution. The theoretical foundation of these methods is excellent, but in practice they can suffer from overfitting unless the complexity of the model is constrained. A common example is the Gaussian mixture model, which gives each object a probability of belonging to each cluster (a soft clustering). To obtain a hard clustering, each object is assigned to the Gaussian distribution it most likely belongs to.

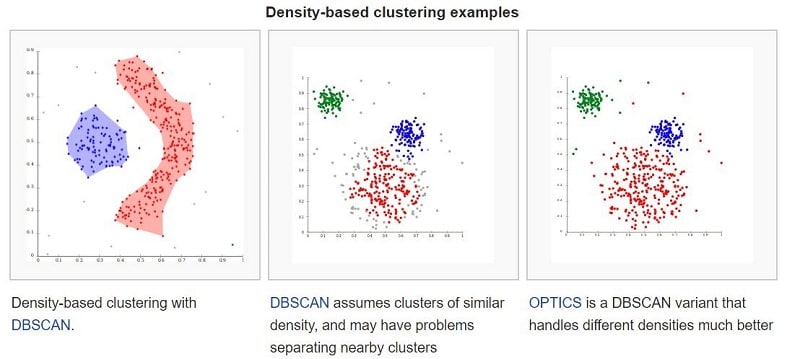

- Density-based clustering: In density-based clustering, clusters are defined as areas of higher data density than the rest of the data set. The most popular density-based method is DBSCAN, which is based on density reachability. Clusters are formed from density-connected objects, so they can take arbitrary shapes. However, the method relies on a drop in density to detect the borders between clusters, so it does not work well when neighbouring groups have the same density.
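Connectivity-based clustering can be sketched as bottom-up (agglomerative) clustering with single linkage, where the distance between two clusters is the distance between their closest members. Single linkage is only one of several linkage criteria and was chosen here for simplicity. The function name `single_linkage` and the `max_distance` cutoff are illustrative assumptions, not a library API.

```python
import math

def single_linkage(points, max_distance):
    """Agglomerative clustering with single linkage.

    Starts with every point in its own cluster and repeatedly merges the two
    clusters whose closest members are nearest. Stops once that gap exceeds
    max_distance. Returns a list of clusters (lists of point indices).
    """
    clusters = [[i] for i in range(len(points))]

    def gap(c1, c2):
        # Single linkage: distance between the closest pair of members.
        return min(math.dist(points[i], points[j]) for i in c1 for j in c2)

    while len(clusters) > 1:
        best = None
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                d = gap(clusters[a], clusters[b])
                if best is None or d < best[0]:
                    best = (d, a, b)
        d, a, b = best
        if d > max_distance:
            break  # every remaining pair of clusters is farther apart than the cutoff
        # Each merge corresponds to one join in the dendrogram at height d.
        clusters[a] = clusters[a] + clusters[b]
        del clusters[b]  # b > a, so index a is unaffected
    return clusters

points = [(0, 0), (0, 1), (5, 5), (5, 6), (20, 20)]
print(single_linkage(points, max_distance=2))   # [[0, 1], [2, 3], [4]]
print(single_linkage(points, max_distance=10))  # [[0, 1, 2, 3], [4]]
```

Cutting at different distances gives different clusterings. This is the same effect a dendrogram shows when it is cut at different heights.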
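For centroid-based clustering with a fixed k, k-means is usually computed with Lloyd's algorithm. This algorithm alternates between assigning points to their nearest center and moving each center to the mean of its points. The following is a minimal sketch that assumes numeric points and random initial centers drawn from the data. Real implementations typically use smarter seeding, such as k-means++, and several restarts.

```python
import math
import random

def k_means(points, k, iterations=100, seed=0):
    """Lloyd's algorithm for k-means. Returns (centroids, labels)."""
    rng = random.Random(seed)
    # Initialise the centroids with k data points chosen at random.
    centroids = [tuple(p) for p in rng.sample(points, k)]
    labels = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: each point joins its nearest centroid.
        labels = [min(range(k), key=lambda c: math.dist(p, centroids[c])) for p in points]
        # Update step: move each centroid to the mean of its members.
        new_centroids = []
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                new_centroids.append(tuple(sum(xs) / len(members) for xs in zip(*members)))
            else:
                new_centroids.append(centroids[c])  # keep an empty cluster's centroid
        if new_centroids == centroids:
            break  # converged: assignments will no longer change
        centroids = new_centroids
    return centroids, labels

points = [(1, 1), (1.5, 2), (1, 1.5), (8, 8), (8.5, 9), (9, 8)]
centroids, labels = k_means(points, k=2)
print(labels)  # the first three points share one label, the last three the other
```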
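A Gaussian mixture model fitted by expectation-maximization (EM) illustrates the difference between soft and hard clustering in distribution-based methods. The following is a minimal sketch that assumes one-dimensional data, two components, and hand-picked starting means. The helper names are hypothetical, and production code would add restarts and a convergence test.

```python
import math

def normal_pdf(x, mean, var):
    """Density of a 1-D normal distribution."""
    return math.exp(-((x - mean) ** 2) / (2 * var)) / math.sqrt(2 * math.pi * var)

def responsibilities(data, weights, means, variances):
    """E-step: soft membership of every point in every component."""
    resp = []
    for x in data:
        dens = [w * normal_pdf(x, m, v) for w, m, v in zip(weights, means, variances)]
        total = sum(dens)
        resp.append([d / total for d in dens])
    return resp

def gmm_1d(data, init_means, iterations=50, min_var=1e-6):
    """Fit a 1-D Gaussian mixture with EM, starting from the given means."""
    n, k = len(data), len(init_means)
    means = list(init_means)
    mu = sum(data) / n
    variances = [sum((x - mu) ** 2 for x in data) / n] * k  # start from the overall variance
    weights = [1.0 / k] * k
    for _ in range(iterations):
        resp = responsibilities(data, weights, means, variances)
        # M-step: re-estimate each component from its weighted points.
        for j in range(k):
            nj = sum(r[j] for r in resp)
            weights[j] = nj / n
            means[j] = sum(r[j] * x for r, x in zip(resp, data)) / nj
            # min_var stops a component from collapsing onto a single point.
            variances[j] = max(min_var, sum(r[j] * (x - means[j]) ** 2 for r, x in zip(resp, data)) / nj)
    return weights, means, variances, responsibilities(data, weights, means, variances)

data = [1.0, 1.2, 0.8, 1.1, 5.0, 5.3, 4.9, 5.1]
weights, means, variances, soft = gmm_1d(data, init_means=[0.0, 6.0])
# Hard clustering: assign each point to its most likely component.
hard = [max(range(len(r)), key=lambda j: r[j]) for r in soft]
print(hard)  # [0, 0, 0, 0, 1, 1, 1, 1]
```

Each row of `soft` sums to 1 and gives the soft clustering. Taking the most likely component for each point turns it into the hard clustering in `hard`.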
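DBSCAN's density reachability can be sketched in a few lines. This minimal version assumes Euclidean distance and a brute-force neighbour search. `eps` (the neighbourhood radius) and `min_pts` (the number of neighbours a core point needs) are the algorithm's usual two parameters, and the sample points are made up.

```python
import math

NOISE = -1

def dbscan(points, eps, min_pts):
    """Minimal DBSCAN. Returns one label per point, with NOISE (-1) for outliers."""
    def neighbours(i):
        # Indices of all points within eps of point i, including i itself.
        return [j for j in range(len(points)) if math.dist(points[i], points[j]) <= eps]

    labels = [None] * len(points)  # None means "not visited yet"
    cluster = 0
    for i in range(len(points)):
        if labels[i] is not None:
            continue
        seeds = neighbours(i)
        if len(seeds) < min_pts:
            labels[i] = NOISE  # may later be relabelled as a border point
            continue
        labels[i] = cluster  # i is a core point, so it starts a new cluster
        queue = [j for j in seeds if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == NOISE:
                labels[j] = cluster  # border point reachable from a core point
            if labels[j] is not None:
                continue  # already assigned; border points are not expanded
            labels[j] = cluster
            j_neighbours = neighbours(j)
            if len(j_neighbours) >= min_pts:
                queue.extend(j_neighbours)  # j is also a core point, so keep expanding
        cluster += 1
    return labels

points = [(0, 0), (0, 1), (1, 0), (1, 1),
          (10, 10), (10, 11), (11, 10), (11, 11),
          (50, 50)]
print(dbscan(points, eps=1.5, min_pts=3))  # [0, 0, 0, 0, 1, 1, 1, 1, -1]
```

The isolated point at (50, 50) has no dense neighbourhood, so it is reported as noise instead of being forced into a cluster.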

Evaluation and assessment

Evaluating or validating clustering results is as difficult as the clustering itself. There are many techniques for evaluating clusters. Below are the two basic approaches.

Internal evaluation

When clustering results are evaluated using only the data that was clustered, the process is known as internal evaluation. These methods give the best score to the algorithm that produces clusters with high similarity within each cluster and low similarity between clusters. A high internal score does not necessarily mean that the clusters are useful, for example in information retrieval applications. Moreover, internal evaluation is biased toward algorithms that use the same cluster model as the evaluation measure.
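One widely used internal measure is the silhouette coefficient. It compares how close each object is to its own cluster with how close it is to the nearest other cluster. The following is a minimal sketch that assumes Euclidean distance and at least two clusters. The example points and labelings are made up.

```python
import math

def silhouette_score(points, labels):
    """Mean silhouette coefficient in [-1, 1]. Higher values mean tighter,
    better-separated clusters."""
    clusters = set(labels)
    if len(clusters) < 2:
        raise ValueError("need at least two clusters")
    scores = []
    for i, p in enumerate(points):
        # Mean distance from p to the other members of each cluster.
        mean_dist = {}
        for c in clusters:
            dists = [math.dist(p, q) for j, q in enumerate(points) if labels[j] == c and j != i]
            if dists:
                mean_dist[c] = sum(dists) / len(dists)
        own = labels[i]
        if own not in mean_dist:
            scores.append(0.0)  # convention: a point in a singleton cluster scores 0
            continue
        a = mean_dist[own]  # cohesion: mean distance within its own cluster
        b = min(d for c, d in mean_dist.items() if c != own)  # separation
        scores.append((b - a) / max(a, b) if max(a, b) > 0 else 0.0)
    return sum(scores) / len(scores)

points = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
print(silhouette_score(points, [0, 0, 0, 1, 1, 1]))  # close to 1
print(silhouette_score(points, [0, 1, 0, 1, 0, 1]))  # much lower
```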

External evaluation

In external evaluation, clustering results are evaluated using data that was not used for the clustering, such as known class labels. These labels consist of a set of pre-classified items, often created by human experts. External evaluation measures how closely the clustering matches these predefined benchmark classes.
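A simple external measure is the Rand index. It is the fraction of object pairs on which the clustering and the expert class labels agree, meaning that both put the pair together or both keep it apart. The following is a minimal sketch, and the example labels are made up.

```python
from itertools import combinations

def rand_index(labels_true, labels_pred):
    """Fraction of object pairs on which two labelings agree."""
    if len(labels_true) != len(labels_pred):
        raise ValueError("labelings must have the same length")
    if len(labels_true) < 2:
        raise ValueError("need at least two objects")
    agree = total = 0
    for i, j in combinations(range(len(labels_true)), 2):
        same_true = labels_true[i] == labels_true[j]
        same_pred = labels_pred[i] == labels_pred[j]
        agree += same_true == same_pred  # True counts as 1
        total += 1
    return agree / total

experts = ["spam", "spam", "ham", "ham"]
print(rand_index(experts, [1, 1, 0, 0]))  # 1.0: cluster ids need not match label names
print(rand_index(experts, [0, 1, 0, 1]))  # about 0.333
```

The adjusted Rand index is a common variant that corrects this score for agreement expected by chance.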

Applications Of Cluster Analysis

Cluster analysis is used in many applications, including pattern recognition, marketing research, image processing, and data analysis. It can help marketers and influencers discover distinct target groups within their customer base and then characterize these groups by their purchasing patterns. It also helps with data presentation and analysis.

Cluster analysis is also useful in biology, where it can be used to categorize genes, derive plant and animal taxonomies, and gain insight into structures inherent in populations.

It can also help identify areas of similar land use. Groups of houses in a city can be identified by geographical location, house type, value, and so on. Clustering also helps to organize and classify documents for information discovery. Finally, clustering can be used to detect fraudulent activity, such as credit card fraud.

Requirements of Clustering in Data Mining

Clustering helps us make the most of our data by grouping it. We now deal with large databases on a daily basis, so a clustering process in data mining must meet a few basic requirements, listed below.

- Ability to deal with different kinds of attributes: Data sets contain many kinds of values, so clustering algorithms should be able to work with all kinds of data, such as interval-based (numerical) data or categorical data.

- Discovery of clusters with arbitrary shape: The clustering algorithm should be capable of detecting clusters of arbitrary shape.

- High dimensionality: Data sets can contain low-dimensional as well as high-dimensional data, so the clustering algorithm should be able to handle both.

- Ability to deal with noisy data: Databases often contain noisy, erroneous, or missing data, sometimes in large amounts. Some algorithms are sensitive to such data and may produce wrong results.
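One common way to handle mixed attribute types is a Gower-style dissimilarity. Each interval attribute contributes its absolute difference divided by that attribute's range. Each categorical attribute contributes 0 on a match and 1 on a mismatch (simple matching). The following is a minimal sketch in which the function name, the `kinds` and `ranges` parameters, and the house records are hypothetical illustrations.

```python
def mixed_dissimilarity(a, b, kinds, ranges):
    """Gower-style dissimilarity between two records with mixed attributes.

    kinds[k] is "interval" or "categorical". Interval attributes contribute
    |x - y| / ranges[k], so each lies in [0, 1] if ranges[k] is the data range.
    Categorical attributes use simple matching: 0 if equal, 1 otherwise.
    """
    if not (len(a) == len(b) == len(kinds) == len(ranges)):
        raise ValueError("records, kinds and ranges must have the same length")
    total = 0.0
    for k, (x, y) in enumerate(zip(a, b)):
        if kinds[k] == "interval":
            total += abs(x - y) / ranges[k] if ranges[k] else 0.0
        elif kinds[k] == "categorical":
            total += 0.0 if x == y else 1.0
        else:
            raise ValueError(f"unknown attribute kind: {kinds[k]}")
    return total / len(kinds)  # average over attributes

# Hypothetical houses: (price in thousands, house type, district).
kinds = ["interval", "categorical", "categorical"]
ranges = [500.0, None, None]  # assumed price range across the whole data set
h1 = (250.0, "flat", "north")
h2 = (300.0, "flat", "south")
print(mixed_dissimilarity(h1, h2, kinds, ranges))  # (0.1 + 0 + 1) / 3, about 0.367
```

Because every attribute is scaled to the same [0, 1] range, no single numeric attribute dominates the result.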

Techniques used in cluster analysis

- Artificial Neural Network

- Nearest Neighbour Search

- Neighbourhood components analysis

- Latent Class Analysis

- Affinity Propagation
