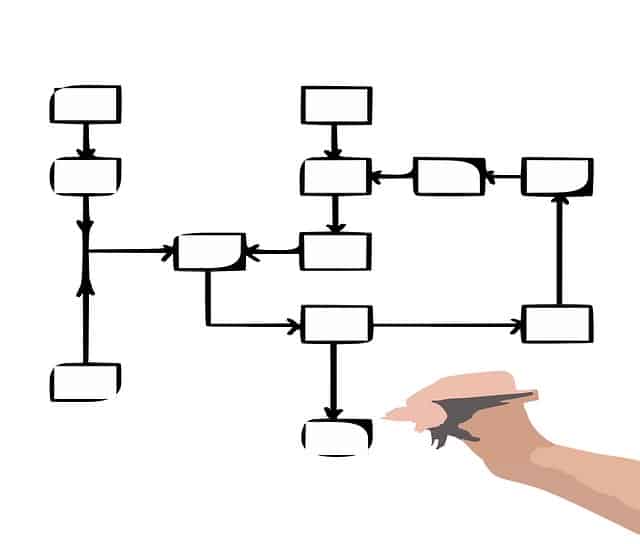

A decision tree is a flowchart-like tree structure in which each internal node represents a test on an attribute, each branch represents a decision rule, and each leaf node represents an outcome. The topmost node is known as the root node. The tree learns to partition the data based on attribute values, and because each partition is split again in the same way, the process is called recursive partitioning. This flowchart-like structure supports decision making, and because the diagram mimics the way people reason through a choice, decision trees are easy to understand and interpret.

The decision tree is a white-box ML algorithm. It exposes its internal decision-making logic, which is not available in a black-box algorithm such as a neural network, and it trains faster than a neural network. The time complexity of a decision tree is a function of the number of records and the number of attributes in the given data. Moreover, the decision tree is a non-parametric, or distribution-free, method: it does not depend on assumptions about the probability distribution of the data. It can handle high-dimensional data with good accuracy.

How do you build a decision tree classifier with scikit-learn?

- Import the model you want to use. In scikit-learn, all models are implemented as Python classes.

- Create an instance of the model, setting max_depth=2 to pre-prune the tree so that its depth cannot exceed 2.

- Train the model on the data. The model learns the relationship between X (sepal length, sepal width, petal length, and petal width) and y (the species of iris).

- Finally, predict the labels of unseen test data.
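The four steps above can be sketched as follows. This is a minimal example rather than a definitive recipe: the 75/25 train/test split and the `random_state=0` seeds are assumptions added here for reproducibility and are not part of the original steps.

```python
# Step 1: import the model class (plus helpers for data and splitting).
from sklearn.datasets import load_iris
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

# Load the iris data: X holds the four flower measurements, y the species.
iris = load_iris()
X_train, X_test, y_train, y_test = train_test_split(
    iris.data, iris.target, test_size=0.25, random_state=0  # assumed split
)

# Step 2: create an instance, pre-pruned to a depth of at most 2.
clf = DecisionTreeClassifier(max_depth=2, random_state=0)

# Step 3: train the model on the training data.
clf.fit(X_train, y_train)

# Step 4: predict labels for the unseen test data.
y_pred = clf.predict(X_test)

# Accuracy is the fraction of test samples labelled correctly.
accuracy = clf.score(X_test, y_test)
print(f"Test accuracy: {accuracy:.3f}")
```

`score` returns the mean accuracy on the data passed to it, so it should be called on the held-out test set rather than on the training set.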

How do you tune the tree's depth?

You can tune the model by searching for the optimal value of max_depth. Keep in mind, however, that max_depth is not the same as the actual depth of the decision tree: it is only an upper limit. If every leaf is already pure at a smaller depth, the tree stops splitting before it reaches max_depth.
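One way to search for a good max_depth is to try a range of values and compare cross-validated accuracy. The sketch below assumes the iris dataset, 5-fold cross-validation, and a candidate range of 1 to 10; all three are illustrative choices. It also records the depth each tree actually reaches, which may be smaller than the max_depth it was given.

```python
from sklearn.datasets import load_iris
from sklearn.model_selection import cross_val_score
from sklearn.tree import DecisionTreeClassifier

X, y = load_iris(return_X_y=True)

results = {}
for max_depth in range(1, 11):  # candidate range is an assumption
    clf = DecisionTreeClassifier(max_depth=max_depth, random_state=0)
    # Mean accuracy over 5 cross-validation folds.
    mean_score = cross_val_score(clf, X, y, cv=5).mean()
    # Fit on all data to see how deep the tree really grows.
    actual_depth = clf.fit(X, y).get_depth()
    results[max_depth] = (mean_score, actual_depth)

# Pick the max_depth with the highest cross-validated accuracy.
best_depth = max(results, key=lambda d: results[d][0])

for depth, (score, actual) in results.items():
    print(f"max_depth={depth:2d}  cv accuracy={score:.3f}  actual depth={actual}")
print("Best max_depth:", best_depth)
```

Once the actual depth stops increasing, raising max_depth further has no effect, because the tree has nothing left to split.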

What are the advantages of decision trees?

- Decision trees are easy to visualize and interpret.

- They let you calculate feature importance, which is the total reduction in entropy or Gini index brought about by the splits on a given feature.

- Non-linear patterns are easily captured.

- Little data preprocessing is required from the user. For example, you do not need to normalize columns.

- They make no assumptions about the distribution of the data, because the algorithm is non-parametric.

- They are well suited to variable selection and to predicting missing values.
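To make the feature importance point concrete, here is a small pure-Python sketch of Gini impurity and of the impurity reduction achieved by a single split. The helper names `gini` and `impurity_decrease` and the toy labels are made up for illustration. In scikit-learn, the `feature_importances_` attribute of a fitted tree reports the normalized total of such reductions for each feature.

```python
from collections import Counter


def gini(labels):
    """Gini impurity: 1 minus the sum of squared class proportions."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())


def impurity_decrease(parent, left, right):
    """Parent impurity minus the size-weighted impurity of the children."""
    n = len(parent)
    weighted_children = (len(left) / n) * gini(left) + (len(right) / n) * gini(right)
    return gini(parent) - weighted_children


# Toy data: six samples, two classes.
parent = ["a", "a", "a", "b", "b", "b"]

# A split that separates the classes perfectly removes all impurity.
perfect = impurity_decrease(parent, ["a", "a", "a"], ["b", "b", "b"])

# A split that leaves both children mixed removes very little.
mixed = impurity_decrease(parent, ["a", "b", "a"], ["b", "a", "b"])

print(f"perfect split: {perfect:.3f}, mixed split: {mixed:.3f}")
```

A feature whose splits produce large reductions across the tree ends up with a high importance, which is what makes the measure useful for variable selection.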

What are its disadvantages?

- A small variation in the data can produce a very different decision tree, although this instability can be reduced with bagging and boosting algorithms.

- Decision trees are biased when the dataset is imbalanced, so it is advisable to balance the dataset before building the tree.

- They are sensitive to noisy data and may overfit it.
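The first two points can be addressed in scikit-learn roughly as sketched below. The dataset, the 50 estimators, and the 5-fold cross-validation are assumptions chosen for illustration. Iris is already balanced, so `class_weight="balanced"` appears only to show where the option goes, and no particular accuracy gain from bagging is implied on a dataset this small.

```python
from sklearn.datasets import load_iris
from sklearn.ensemble import BaggingClassifier
from sklearn.model_selection import cross_val_score
from sklearn.tree import DecisionTreeClassifier

X, y = load_iris(return_X_y=True)

# A single tree; class_weight="balanced" reweights classes inversely to
# their frequency, which is one alternative to resampling imbalanced data.
single_tree = DecisionTreeClassifier(class_weight="balanced", random_state=0)

# Bagging: train many trees on bootstrap samples and combine their votes,
# which dampens the instability of any one tree.
bagged_trees = BaggingClassifier(
    DecisionTreeClassifier(random_state=0), n_estimators=50, random_state=0
)

single_score = cross_val_score(single_tree, X, y, cv=5).mean()
bagged_score = cross_val_score(bagged_trees, X, y, cv=5).mean()

print(f"single tree: {single_score:.3f}, bagged trees: {bagged_score:.3f}")
```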

Thus, decision trees can be used as classifiers or as regression models. The algorithm builds a tree structure that splits the dataset into smaller and smaller subsets until each subset yields a prediction. Decision nodes split the data, and leaf nodes give the predictions, which are reached by following simple IF..AND..AND..THEN logic down through the nodes.
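The IF..AND..THEN logic can be illustrated with a tiny hand-written tree. The sketch below is not a trained model: the thresholds (2.5 and 1.7), the dictionary layout, and the `predict` helper are hypothetical values and names chosen only to show how a sample travels from the root to a leaf.

```python
# Each decision node tests one feature against a threshold;
# each leaf node carries a label. All values here are illustrative.
tree = {
    "feature": "petal_length",
    "threshold": 2.5,
    "left": {"label": "setosa"},
    "right": {
        "feature": "petal_width",
        "threshold": 1.7,
        "left": {"label": "versicolor"},
        "right": {"label": "virginica"},
    },
}


def predict(node, sample):
    """Walk from the root to a leaf, going left when value <= threshold."""
    while "label" not in node:
        go_left = sample[node["feature"]] <= node["threshold"]
        node = node["left"] if go_left else node["right"]
    return node["label"]


# IF petal_length > 2.5 AND petal_width <= 1.7 THEN versicolor
sample = {"petal_length": 4.5, "petal_width": 1.4}
print(predict(tree, sample))
```

Each root-to-leaf path corresponds to one rule, which is why a fitted tree can be read as a set of plain conditions.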