As the use of artificial intelligence (AI) grows, businesses increasingly need to ensure compliance when developing and deploying AI-based products. This blog post provides 10 steps that businesses should take to comply with applicable regulations when developing and deploying AI products. By following these steps, businesses can reduce the risk of violations and of costly penalties. The post covers what compliance requirements exist for AI-based products, how to develop a compliance strategy, and the steps needed to keep an AI-based product compliant.

1) Understanding Compliance in AI Development

The development of artificial intelligence (AI) has brought numerous opportunities for innovation, but it has also come with regulatory compliance challenges. Compliance in AI development means ensuring that AI products are developed in accordance with legal, ethical, and technical standards, and that they respect the rights and dignity of all stakeholders.

As businesses race to create more sophisticated AI technologies, there is a growing concern about potential violations. It’s essential that companies adhere to compliance rules to avoid the legal, financial, and reputational consequences that come with noncompliance.

Understanding compliance in AI development means understanding the legal and ethical considerations that come with creating these complex systems, including data privacy, fairness, bias, and accountability. Compliance must be maintained throughout the entire development process: data collection, development, testing, and deployment.

Companies need to consider the standards that must be met throughout the entire AI development cycle. Compliance starts with ensuring that AI technology aligns with legal and regulatory requirements. AI systems must also respect individual privacy rights, and the data collected and analyzed must be ethically used. Companies must remain mindful of possible compliance risks that arise during each stage of the AI development process.

As the AI landscape continues to grow, businesses must not only stay ahead of emerging technology, but they must also stay up to date with changing legal and regulatory requirements. It is critical to ensure that the AI development process stays compliant while also staying focused on creating products that offer valuable, impactful benefits for all stakeholders involved.

2) Identifying Potential Compliance Violations in AI

The development of AI technology is becoming increasingly popular across various industries, from healthcare to finance. However, as AI becomes more complex and sophisticated, so too do the compliance issues surrounding it. Companies must be mindful of potential compliance violations that may arise during the development of AI products and take steps to mitigate these risks.

One of the first steps in avoiding compliance violations in AI development is to identify potential issues. A variety of factors can contribute to compliance risk, including data privacy, ethical considerations, and regulatory requirements. Companies must be aware of these issues and proactively address them during the development process.

Data privacy is a major concern in the development of AI products. Companies must ensure that they are collecting and storing data in a secure manner, with appropriate consent and transparency for the individuals involved. Failure to do so can lead to costly penalties and damage to a company’s reputation.

Ethical considerations are also important in the development of AI products. Companies must be mindful of the potential impact that their products may have on society and take steps to avoid harm. This may include building in safeguards against bias, ensuring that data is representative of diverse populations, and developing ethical frameworks for decision-making.

Finally, regulatory compliance is a key consideration for companies developing AI products. Different industries may be subject to different regulations, and it is important to stay up to date on any changes or new requirements. This may include complying with data protection laws such as GDPR or HIPAA, or meeting ethical standards outlined by professional organizations.

By identifying potential compliance violations in AI development and taking steps to mitigate these risks, companies can ensure that their products are not only effective and innovative, but also ethical and compliant. This requires a commitment to ongoing monitoring and risk assessment throughout the development process. Ultimately, the success of AI technology depends on its ability to operate within ethical and regulatory guidelines, while still providing valuable benefits to society.

3) Ensuring Ethical AI Development

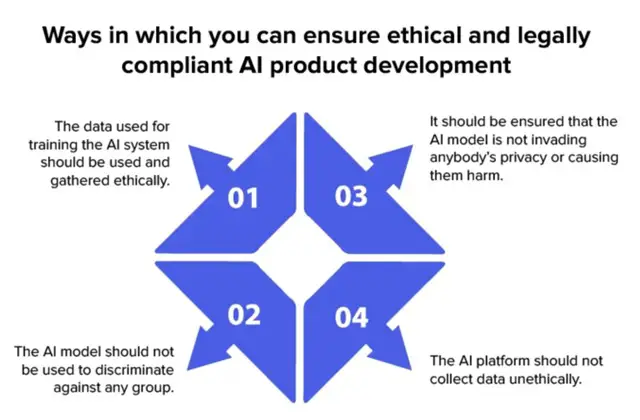

As AI becomes more advanced and integrated into our daily lives, it’s important to prioritize ethics and moral considerations during its development. Ensuring ethical AI development means creating products that benefit society while mitigating any negative impacts.

AI ethics refers to the ethical principles that should guide the development and deployment of AI technology. AI ethics includes considerations such as transparency, accountability, fairness, and responsibility. These principles ensure that AI products are designed and deployed in ways that benefit society and minimize any potential harm.

Ethical AI development requires a multifaceted approach that involves collaboration between developers, regulators, and other stakeholders. Developers should incorporate ethical considerations into the design process, ensuring that AI algorithms and systems are transparent and explainable. This ensures that AI operates in a manner that is predictable and trustworthy.

In addition to designing AI with ethical considerations in mind, developers should also be proactive in mitigating any potential risks associated with AI development. This includes conducting ongoing risk assessments and developing plans to address any potential ethical issues that arise.

In short, ethical AI development requires a commitment to transparency and accountability, and a willingness to collaborate with stakeholders to create products that benefit society. By prioritizing ethics during AI development, we can create a better future for everyone.

4) Steps to Avoid Compliance Violations in AI Development

Developing artificial intelligence (AI) products can be a daunting task, especially when compliance and ethics are at stake. To ensure that your AI development efforts are in compliance with legal and ethical standards, consider the following ten steps:

- Clearly define your goals and objectives before embarking on AI development.

- Assess and manage risks associated with AI development and deployment. Make sure that you have a plan in place for mitigating potential risks, such as unintended consequences and security breaches.

- Incorporate privacy and data protection measures into your AI development processes. Ensure that your AI systems do not compromise individuals’ privacy or collect unnecessary personal information.

- Train your employees on compliance and ethics in AI development. Ensure that they understand the importance of adhering to compliance regulations and ethical standards in AI development.

- Document your compliance efforts in AI development. Keeping a detailed record of your compliance processes and practices can help you avoid violations in the future.

- Regularly review and update your AI development processes to ensure continued compliance. Keep up to date with changes in regulations and industry best practices, and make adjustments as necessary.

- Collaborate with experts in compliance, ethics, and AI to stay ahead of potential violations and address emerging ethical issues.

- Implement quality control measures to ensure that your AI products function as intended and comply with applicable regulations.

- Consider anchoring your AI development processes to an established regulation or standard, such as the General Data Protection Regulation (GDPR) for personal data or the ISO/IEC 27001 standard for information security management.

- Regularly monitor your AI systems for compliance violations, and promptly address any issues that arise. This includes monitoring for any potential bias in your AI systems that could unfairly impact individuals or groups.

By following these ten steps, you can help ensure that your AI development efforts remain in compliance with legal and ethical standards, and avoid potential violations and reputational damage. Remember, it’s crucial to build trust with your customers, stakeholders, and regulatory agencies, and complying with laws and ethical standards is a crucial aspect of building that trust.

5) Conducting Risk Assessments in AI Development

As with any new technology, there are inherent risks associated with developing AI products. Conducting a risk assessment is critical to ensuring that compliance violations do not occur during the development process. It involves evaluating the potential risks associated with the technology and assessing the likelihood and impact of each risk.

To begin the risk assessment process, it’s important to assemble a team of experts in the relevant fields, including technology, law, and ethics. Together, the team can identify potential risks and analyze the potential consequences of each risk.

During the assessment process, it’s important to consider the following risks:

- Bias: AI can learn and replicate the biases of its creators and data sources, resulting in discriminatory outcomes.

- Privacy: AI can process sensitive data that may be protected by data privacy regulations, such as GDPR or CCPA.

- Security: AI can be susceptible to cyber-attacks, leading to unauthorized access or misuse of data.

- Legal: AI can be subject to legal regulations, such as intellectual property or consumer protection laws.

Once the risks have been identified, it’s essential to determine the likelihood and impact of each one. The team should evaluate how likely each risk is to occur and how severe the consequences could be.

After assessing the risks, it’s necessary to prioritize them based on the likelihood and impact. The highest-priority risks should be addressed first, and measures should be implemented to mitigate the risks. The risk assessment process should be ongoing, with the team continually monitoring and reassessing the risks associated with AI development.
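The scoring and prioritization described above can be sketched in a few lines of Python. This is only an illustration: the 1-to-5 scales, the likelihood-times-impact score, the `Risk` class, and the example values are all assumptions, not a prescribed method, and a team may prefer a different scale or weighting.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    name: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (negligible) to 5 (severe)

    @property
    def score(self) -> int:
        # Likelihood x impact product, a common risk-matrix convention.
        return self.likelihood * self.impact


def prioritize(risks: list[Risk]) -> list[Risk]:
    """Return risks sorted from highest to lowest score."""
    return sorted(risks, key=lambda r: r.score, reverse=True)


# Illustrative values only; a real team would assign these in its assessment.
register = [
    Risk("Bias in training data", likelihood=4, impact=4),
    Risk("Privacy: sensitive data under GDPR/CCPA", likelihood=2, impact=5),
    Risk("Security: unauthorized access", likelihood=3, impact=5),
    Risk("Legal: IP or consumer protection", likelihood=2, impact=3),
]

for risk in prioritize(register):
    print(f"{risk.score:>2}  {risk.name}")
```

Because the assessment is ongoing, the register would be re-scored and re-sorted whenever the team revisits its estimates.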

By conducting a thorough risk assessment, the team can identify and mitigate potential compliance violations before they occur. It ensures that the development of AI products is ethical, compliant, and secure, leading to increased trust and confidence in the technology.

6) Incorporating Privacy and Data Protection Measures

As AI becomes more ubiquitous in our daily lives, the need for protecting personal information has become paramount. Developers must prioritize privacy and data protection measures in their AI development process. This not only helps maintain compliance with legal and ethical standards but also ensures the protection of individual rights.

The first step in incorporating privacy and data protection measures is identifying the personal data that AI will handle. This may include personally identifiable information, financial data, medical information, and other sensitive data types. Once this is identified, measures should be put in place to restrict access to this data, and protect it through encryption, access controls, and data storage practices.

Data should be kept only for as long as necessary, and securely destroyed when no longer needed. Developers should also consider data anonymization and de-identification techniques to help prevent accidental exposure of personal information.
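As a minimal sketch of these two ideas, the Python below replaces a direct identifier with a keyed hash and checks whether a record has outlived its retention period. The key, the 365-day period, and the function names are hypothetical placeholders. Note that keyed hashing is pseudonymization rather than full anonymization, since anyone holding the key can re-link records.

```python
import hashlib
import hmac
from datetime import datetime, timedelta, timezone

# Hypothetical values: in practice the key would come from a secrets manager
# and the retention period from your own data retention policy.
PSEUDONYM_KEY = b"replace-with-a-secret-key"
RETENTION = timedelta(days=365)


def pseudonymize(identifier: str, key: bytes = PSEUDONYM_KEY) -> str:
    """Replace a direct identifier with a keyed HMAC-SHA256 digest."""
    return hmac.new(key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def is_expired(collected_at: datetime, now: datetime,
               retention: timedelta = RETENTION) -> bool:
    """True when a record has been held longer than the retention period."""
    return now - collected_at > retention


# Made-up record for illustration.
record = {
    "email": "jane@example.com",
    "collected_at": datetime(2023, 1, 1, tzinfo=timezone.utc),
}

# Store a pseudonymous reference instead of the raw email address.
safe_record = {
    "user_ref": pseudonymize(record["email"]),
    "collected_at": record["collected_at"],
}

if is_expired(safe_record["collected_at"], datetime.now(timezone.utc)):
    print("Record is past its retention period and should be securely deleted.")
```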

It is also essential to ensure transparency in AI processing. Users should be informed about the types of data AI will collect, how it will be used, and who will have access to it. This is crucial for establishing trust and compliance with regulations such as the General Data Protection Regulation (GDPR).

Lastly, developers must consider the potential impact of AI processing on individual rights. They should create algorithms that take into account fairness and discrimination prevention, while providing transparency and explaining any automated decision-making processes.

Incorporating privacy and data protection measures can seem daunting, but it is crucial for ethical AI development. By following these measures, developers can build trust and establish compliance with regulations and standards while protecting individual rights.

7) Training Employees on Compliance and Ethics in AI Development

When developing AI products, ensuring compliance with ethical standards and regulations is crucial. This involves more than just creating a checklist of what needs to be done – it requires an understanding of what is expected, why it is important, and how to achieve compliance in practice. One of the most effective ways to achieve compliance is to ensure that employees who work on AI development are knowledgeable and trained on compliance and ethics.

Training employees on compliance and ethics in AI development should be an ongoing process that involves regular updates on regulatory changes and emerging ethical issues. Employees should understand the importance of compliance in ensuring that the company meets regulatory requirements, mitigates risks, and maintains customer trust. Moreover, they should be aware of the impact their work can have on society and the ethical considerations that should guide their decision-making.

Training programs should also address specific compliance and ethical issues relevant to AI development. This includes training on data protection and privacy regulations, as well as best practices for designing ethical AI systems. It may also include scenarios or case studies that demonstrate how compliance violations can occur and what actions can be taken to prevent them.

In addition to formal training, it is important to create a culture that promotes ethical behavior and compliance. Employees should feel empowered to report concerns or potential violations without fear of retaliation. Managers and executives should lead by example and communicate the importance of compliance throughout the organization.

By training employees on compliance and ethics in AI development, companies can reduce the risk of compliance violations and ensure that their AI products are designed with ethical considerations in mind. It also helps to create a culture that promotes compliance and ethical behavior, which is crucial in an industry that is rapidly evolving and can have significant impacts on society.

8) Documenting Compliance Efforts in AI Development

As the use of artificial intelligence (AI) becomes increasingly widespread, it’s more important than ever for developers to document their compliance efforts. Proper documentation not only ensures legal compliance but also shows a commitment to ethical and responsible AI development.

Documentation should include a clear record of the steps taken to identify and address potential compliance violations, such as ensuring fair and non-discriminatory outcomes and protecting user privacy. It’s also important to document the results of risk assessments and any measures taken to address identified risks.

In addition, employee training on compliance and ethics should be documented to demonstrate a culture of compliance throughout the organization. This training should be ongoing, reflecting the ever-changing landscape of AI and the regulatory environment.
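One lightweight way to keep such records is an append-only log with one structured entry per compliance activity. The sketch below is an assumption about how that might look: the `ComplianceRecord` fields, the activity names, and the example entry are hypothetical, not a required schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class ComplianceRecord:
    activity: str   # hypothetical labels, e.g. "risk_assessment", "training"
    summary: str
    owner: str
    actions_taken: list[str] = field(default_factory=list)
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_json_line(record: ComplianceRecord) -> str:
    """Serialize one record as a single JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


# Made-up entry for illustration.
entry = ComplianceRecord(
    activity="risk_assessment",
    summary="Reviewed training data for demographic imbalance.",
    owner="compliance-team",
    actions_taken=["Resampled under-represented groups", "Scheduled re-review"],
)
line = to_json_line(entry)
print(line)
```

Each line can be appended to a file or shipped to whatever log store the organization already uses, which keeps the record easy to audit later.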

Documenting compliance efforts is not only important for legal compliance, but it also builds trust and credibility with stakeholders, including customers, investors, and regulatory bodies. By being transparent about compliance efforts, developers can show a commitment to responsible and ethical AI development.

In short, documenting compliance efforts should be seen as an integral part of the AI development process. It helps ensure legal compliance, fosters a culture of ethics and responsibility, and builds trust and credibility with stakeholders.

9) Monitoring for Compliance Violations in AI Deployment

Even with all the necessary steps taken to ensure compliance in AI development, there is still a risk of violations occurring during deployment. Therefore, monitoring for compliance violations is crucial to ensure that AI products are used ethically and in line with regulations.

This process requires constant vigilance and attention to detail. It involves monitoring the AI system for irregularities or violations and taking prompt corrective action when necessary. This includes monitoring the use of sensitive data and ensuring that the AI system is being used for its intended purpose.

It is also essential to monitor how users interact with the AI system. This can help detect any misuse of the system and provide insights into how it can be improved. In addition, monitoring the output of the AI system can help identify any biases or errors that may be occurring.
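Output monitoring for bias can start with something as simple as comparing positive-outcome rates across groups. The Python below is a rough sketch under stated assumptions: the log format, the function names, and the 0.8 alert threshold (loosely modeled on the "four-fifths" rule of thumb from US employment-selection guidance) are illustrative choices, and a low ratio is a prompt for human review rather than proof of a violation.

```python
from collections import defaultdict


def selection_rates(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of positive outcomes per group from (group, approved) pairs."""
    totals: dict[str, int] = defaultdict(int)
    positives: dict[str, int] = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        positives[group] += int(approved)
    return {g: positives[g] / totals[g] for g in totals}


def disparity_ratio(rates: dict[str, float]) -> float:
    """Lowest group rate divided by the highest; 1.0 means equal rates."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Made-up decision log for illustration.
log = [("A", True), ("A", True), ("A", False), ("A", True),
       ("B", True), ("B", False), ("B", False), ("B", False)]

rates = selection_rates(log)
ratio = disparity_ratio(rates)

ALERT_THRESHOLD = 0.8  # assumed threshold; choose one suited to your context
if ratio < ALERT_THRESHOLD:
    print(f"Review needed: disparity ratio {ratio:.2f}, rates {rates}")
```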

Regular auditing of the AI system is another critical component of compliance monitoring. Audits should be conducted at regular intervals to ensure that the AI system is functioning correctly and in compliance with regulations.

By closely monitoring the AI system, companies can detect compliance violations early and take corrective action before any harm is done. This will help to protect both the company and its customers, ensuring that the use of AI technology is ethical and complies with all regulations.

Conclusion

In conclusion, compliance violations in AI development can have serious consequences for both businesses and consumers. It’s crucial to prioritize compliance and ethics in the development of AI products to ensure their reliability and trustworthiness. By following the 10 steps outlined in this article, businesses can mitigate compliance risks and create responsible AI products.

To further support compliance efforts, businesses can consider hiring software developers in India. India is known for its highly skilled and experienced software developers who can provide quality AI development services at competitive rates. Moreover, working with Indian developers can offer businesses access to diverse expertise and perspectives that can further strengthen their compliance efforts.

Ultimately, prioritizing compliance and ethics in AI development is essential to building trustworthy and reliable products that can drive long-term business success. By following the steps outlined in this article and hiring top-quality software developers in India, businesses can strengthen compliance in their AI development efforts and maintain the trust of their consumers.

FAQs

Q: What are some common compliance violations that can occur during AI development?

A: Some common compliance violations include using biased data sets, failing to obtain proper consent for data usage, and violating data privacy laws.

Q: Why is it important to incorporate privacy and data protection measures in AI development?

A: Incorporating privacy and data protection measures not only helps to ensure compliance, but it also helps to build trust with users and stakeholders. It shows that the development team values the privacy and security of user data.

Q: How can employee training help prevent compliance violations in AI development?

A: Employee training can help to raise awareness of compliance regulations and ethical considerations. When employees have the knowledge and tools they need, they can make informed decisions and avoid unintentional violations.

Q: How can risk assessments be conducted in AI development?

A: Risk assessments involve identifying potential risks and evaluating their likelihood and potential impact. This can be done by reviewing laws and regulations, identifying potential bias in data sets, and analyzing potential security risks.

Q: What should be done in the event of a compliance violation in AI deployment?

A: If a compliance violation occurs, it is important to take immediate action to remedy the situation. This may involve ceasing deployment of the AI product, notifying stakeholders of the violation, and implementing corrective measures to prevent future violations. It is also important to document the violation and any actions taken in response.